Summary for Complex Scenes

Handling complex scenes is the ultimate stress test for AI image generators! Here is a concise overview of how the top models fared when juggling crowds, multiple focal points, and diverse elements:

🏆 Top-Performing Models

📈 Major Trends

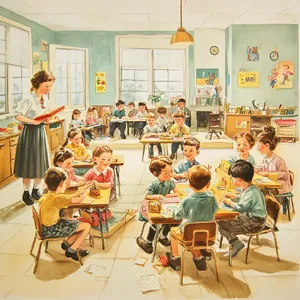

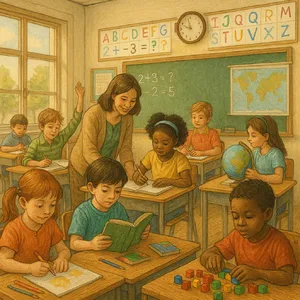

- The Gibberish Penalty: The most common cause of dramatic score drops was nonsensical AI-generated text on signs, banners, and chalkboards in the backgrounds of busy streets and classrooms.

- Subject Dropping: When a prompt lists too many elements, models often silently omit some of them. In the African Savanna prompt, for example, many models left out the zebras entirely.

😲 Surprising Discoveries

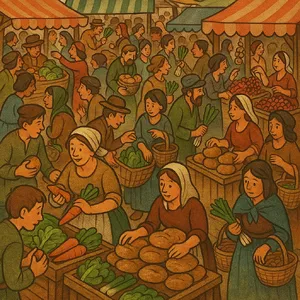

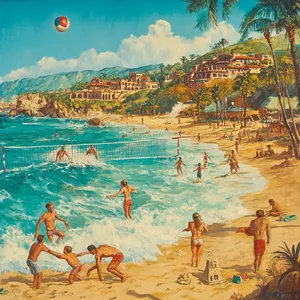

- Style Rebellion: Highly anticipated models like Midjourney V7 and DALL-E 3 often scored surprisingly low (3s and 4s) because they drifted aggressively into stylized aesthetics, such as pixel art or oil painting, instead of producing realistic photography!

📊 General Analysis & Useful Insights

Evaluating complex scenes reveals the true limits of current AI architectures. Here is a deep dive into the patterns, strengths, and failure modes across the tested models.

💪 Comparative Strengths

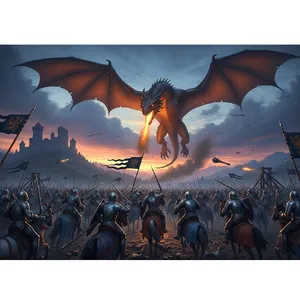

- Atmospheric Mastery: Almost all top-tier models excel at volumetric lighting and depth. Scenes like the Medieval Battlefield and Underwater Reef showcased brilliant handling of mist, smoke, water reflections, and light rays.

- Concept Blending: Models are getting exceptionally good at combining disparate concepts in a single frame. In the Astronaut and Diver prompt, they successfully juxtaposed space suits and deep-sea gear without blurring the two distinct design languages.

🚧 Common Failure Modes

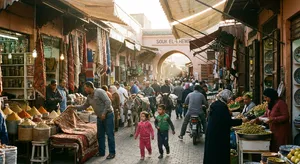

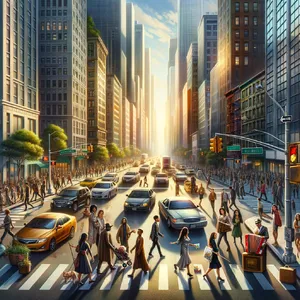

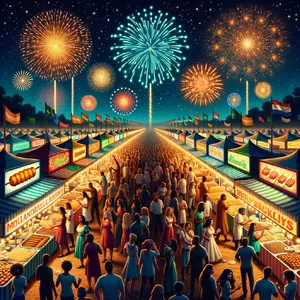

- The Typography Trap: Rendering text in busy scenes remains a massive hurdle. Models like Flux 1.1 Pro Ultra generated beautiful compositions but were severely penalized for plastering gibberish on storefronts in the City Intersection scene.

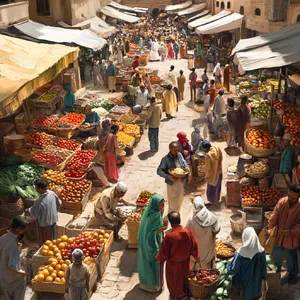

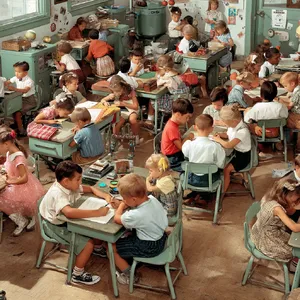

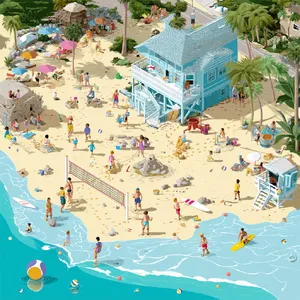

- Crowd 'Melting': While foreground subjects usually look flawless, characters in the mid-ground and background frequently suffer from distorted, melted faces and fused limbs. This telltale "AI sheen" was especially obvious in the Bustling Market evaluations.

- Logical Hallucinations: AI still struggles with physical logic. A prime example is DALL-E 3's Underwater Wreck generation, which showed people without diving gear casually standing on the deck of a submerged ship.

🎯 Quality Factors of Top Performers

The models that consistently scored 8s, 9s, and 10s distinguished themselves by keeping background detail coherent. Rather than rendering crowds as blurry blobs, they preserved the structure of secondary characters and kept environmental details correctly scaled.

🎯 Best Model Analysis by Scenario

Different use cases call for different strengths. Here is a breakdown of which models to use based on your scene requirements:

🌆 Crowds & Urban Environments

🧑‍🍳 Human Interaction & Specific Tasks

🐉 Fantasy & Sci-Fi Integration

- Top Picks: Ideogram V2 and Midjourney V6.1

- Why: If strict photorealism isn't your goal and you want breathtaking artistic flair, these models thrive in the Medieval Battlefield prompt. They compose epic, cinematic shots with brilliant color grading that rivals professional concept art.

🦁 Wildlife & Nature Compositions

- Top Pick: Flux 2 Pro

- Why: Juggling multiple species without them morphing into mutant hybrids is incredibly tough. In the African Savanna test, this model successfully kept elephants, lions, zebras, crocodiles, and flamingos structurally sound and anatomically distinct.